The Rise of AI-Driven Real Estate Analytics: How Predictive Models Are Transforming Asset Valuation

The real estate industry, long characterized by gut instinct and relationship-driven deals, is undergoing a seismic transformation. Artificial intelligence and machine learning algorithms are no longer experimental tools confined to Silicon Valley startups—they’ve become essential infrastructure for property valuations, investment decisions, and urban planning across the globe.

From Manhattan office towers to suburban housing developments in Phoenix, AI-driven analytics platforms are processing millions of data points per second, generating price predictions that increasingly outperform traditional appraisal methods. This shift represents more than technological novelty; it’s a fundamental reimagining of how we understand and value one of humanity’s oldest asset classes.

The Algorithmic Revolution in Property Valuation

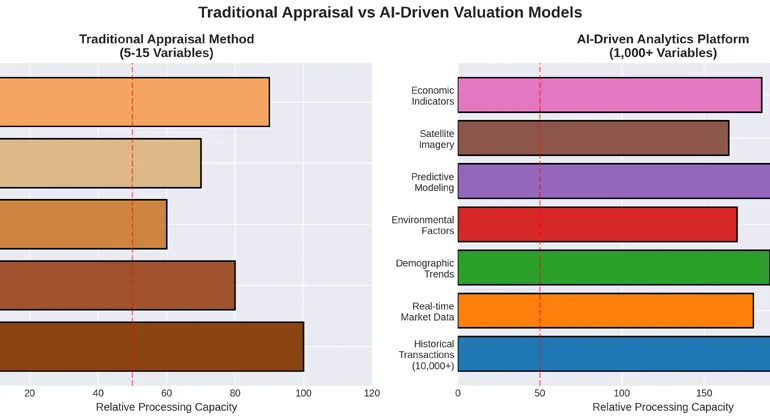

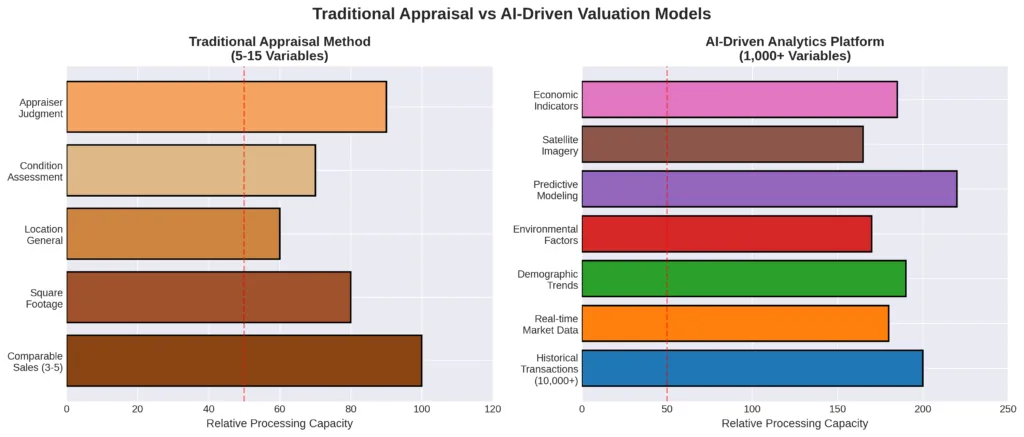

Traditional real estate appraisal has relied on the “comparable sales approach” for decades—a human appraiser examines recent sales of similar properties, adjusts for differences, and arrives at a valuation. While time-tested, this method is inherently limited by the appraiser’s knowledge, available comparables, and subjective judgment.
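To make the mechanics concrete, here is a minimal sketch of the comparable sales approach in Python. The adjustment rates and the sample properties are hypothetical placeholders chosen for illustration; a working appraiser would derive adjustments from local market evidence and weigh far more than two features.

```python
from statistics import median

# Hypothetical dollar adjustments per unit of difference (illustrative only).
ADJUSTMENTS = {"sqft": 150.0, "bedrooms": 10_000.0}


def adjust_comparable(subject, comp):
    """Shift a comparable's sale price to reflect the subject's features."""
    price = float(comp["sale_price"])
    for feature, value_per_unit in ADJUSTMENTS.items():
        # If the subject has more of a feature than the comp, the comp's
        # price is adjusted upward, and vice versa.
        price += (subject[feature] - comp[feature]) * value_per_unit
    return price


def comparable_sales_estimate(subject, comps):
    """Return the median of the adjusted comparable prices."""
    return median(adjust_comparable(subject, c) for c in comps)


subject = {"sqft": 2000, "bedrooms": 3}
comps = [
    {"sale_price": 400_000, "sqft": 1900, "bedrooms": 3},
    {"sale_price": 450_000, "sqft": 2200, "bedrooms": 4},
    {"sale_price": 420_000, "sqft": 2000, "bedrooms": 3},
]

estimate = comparable_sales_estimate(subject, comps)
print(f"Estimated value: ${estimate:,.0f}")
```

With only three comparables and two adjustment factors, the estimate is only as good as the chosen comps and rates, which is exactly the limitation described above.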

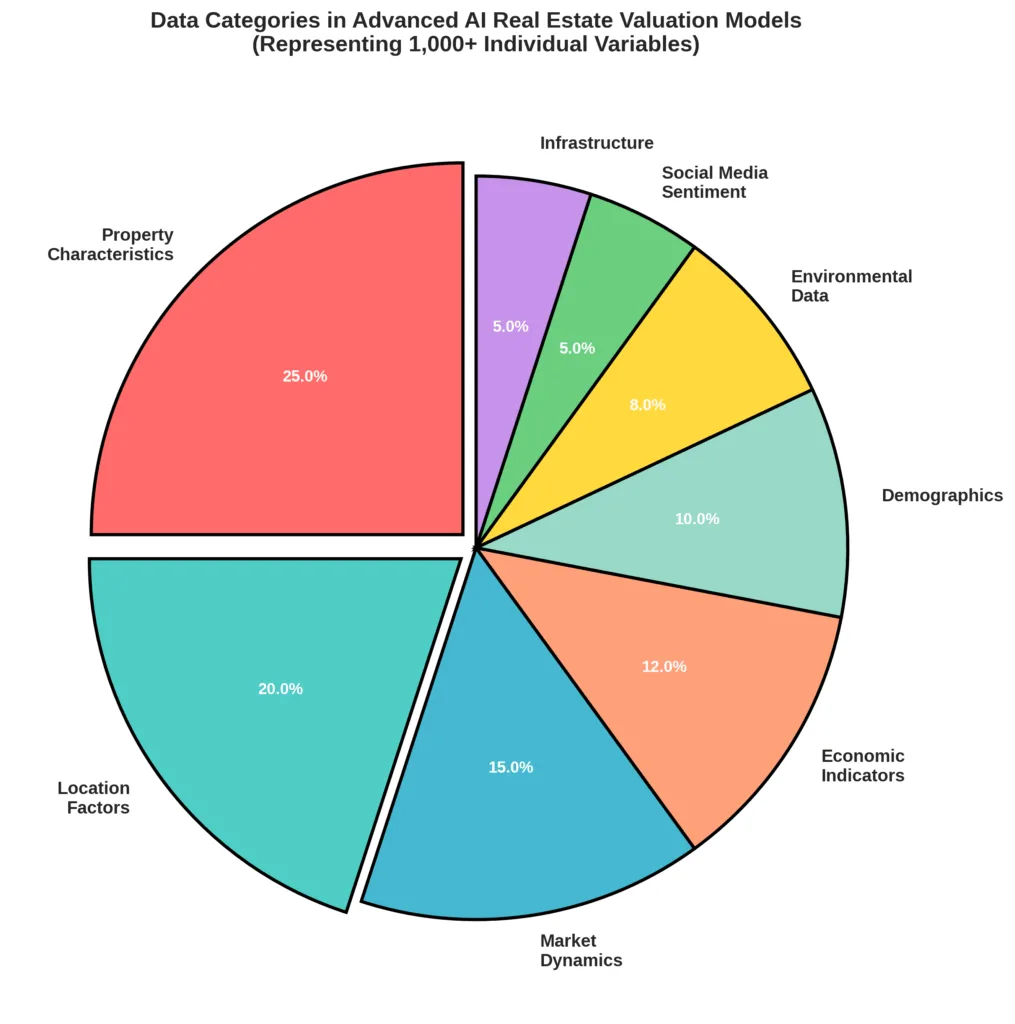

Enter machine learning models trained on comprehensive datasets. Modern AI systems can analyze thousands of variables simultaneously: not just square footage and bedroom count, but proximity to transit hubs, school district performance trends, local crime statistics, employment data, interest rate movements, demographic shifts, social media sentiment about neighborhoods, satellite imagery showing development patterns, and even the linguistic characteristics of property listings.

“We’re seeing prediction accuracy rates of 95 percent or higher in some markets,” explains Dr. Sarah Chen, Chief Data Scientist at Quantrix Analytics, a Boston-based PropTech firm. “The AI doesn’t just look at what a property is today—it models what the surrounding environment will likely become over the next five to ten years.”

This predictive power has profound implications. Institutional investors can identify undervalued assets before market sentiment shifts. Municipalities can forecast tax revenue with unprecedented precision. Developers can model demand scenarios before breaking ground. Individual homeowners can time their sales based on hyper-local market dynamics that would be invisible to the naked eye.

PropTech’s American Frontier: Case Studies from the United States

The United States, with its fragmented real estate markets and wealth of available data, has become a laboratory for AI-driven valuation platforms.

Zillow’s Zestimate Evolution

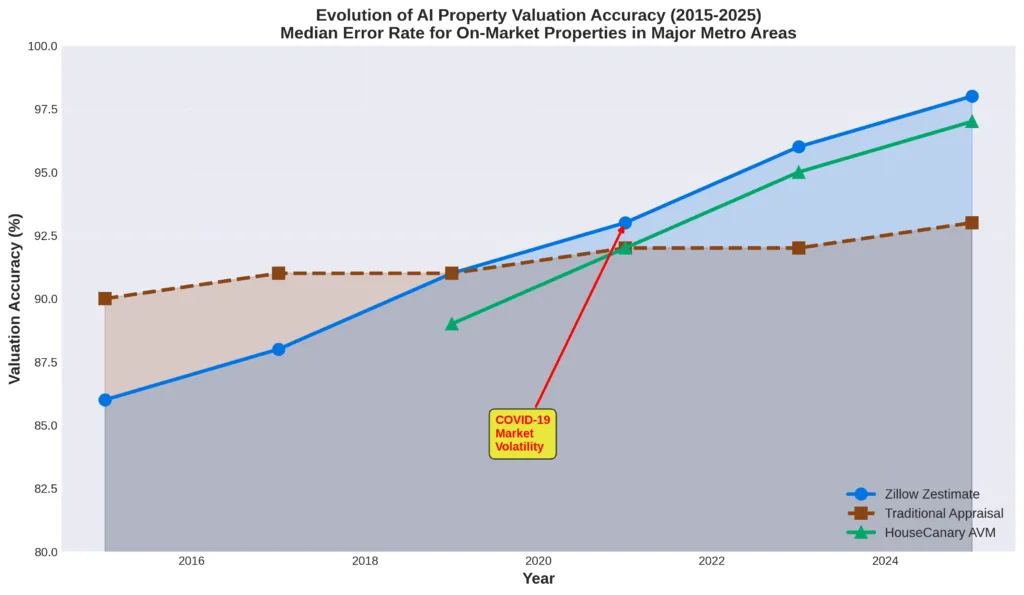

Zillow’s Zestimate, perhaps the most recognizable AI valuation tool, has evolved dramatically since its 2006 launch. The company now runs over 7.5 million statistical and machine learning models across different geographic areas. While early versions struggled with accuracy, recent iterations incorporate computer vision analysis of property photos, natural language processing of listing descriptions, and real-time market sentiment indicators scraped from consumer behavior on the platform itself.

The Zestimate’s median error rate has dropped from 14 percent in 2006 to under 2 percent in 2025 for on-market properties in major metropolitan areas—a level of precision that rivals professional appraisals at a fraction of the cost and time.
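A median error figure is easy to misread, so a short sketch of how such a number is typically computed may help. The function below is an assumed, generic formulation (median absolute percentage error), not Zillow's published methodology, and the sample figures are invented for illustration.

```python
from statistics import median


def median_abs_pct_error(estimates, sale_prices):
    """Median of |estimate - sale price| / sale price across all homes."""
    if not estimates or len(estimates) != len(sale_prices):
        raise ValueError("inputs must be non-empty and the same length")
    return median(abs(e - s) / s for e, s in zip(estimates, sale_prices))


# Invented sample: model estimates versus eventual sale prices.
estimates = [510_000, 294_000, 405_000, 760_000, 198_000]
sale_prices = [500_000, 300_000, 400_000, 800_000, 200_000]

error = median_abs_pct_error(estimates, sale_prices)
print(f"Median error: {error:.1%}")
```

Because the statistic is a median, half of all estimates miss by more than the headline figure. In this sample one home is off by 5 percent even though the median error is 2 percent.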

However, Zillow’s well-publicized 2021 exit from the iBuying business—where it used AI to purchase homes directly—serves as a cautionary tale. The company lost over $500 million when its algorithms failed to account for the unprecedented volatility of pandemic-era housing markets. The lesson was clear: AI excels at pattern recognition within stable parameters but can struggle when faced with genuinely novel conditions.

Cherre: The Data Fabric Connecting Fragmented Markets

While consumer-facing platforms like Zillow generate headlines, enterprise PropTech solutions are quietly reshaping institutional real estate. Cherre, a New York-based data platform, exemplifies this trend. Rather than building its own valuation models, Cherre aggregates and standardizes data from hundreds of disparate sources—tax records, rental listings, construction permits, environmental reports, demographic surveys, economic indicators—creating what CEO L.D. Salmanson calls “a single source of truth” for commercial real estate.

Major institutional investors including Blackstone, Brookfield, and Morgan Stanley use Cherre’s platform to feed their proprietary AI models. The result is investment decisions made in days rather than months, with risk assessments incorporating variables that human analysts might overlook.

“A decade ago, a commercial real estate investment committee might review twenty data points before approving an acquisition,” Salmanson notes. “Today, our clients are analyzing twenty thousand variables. The human role hasn’t disappeared—it’s elevated to pattern synthesis and strategic judgment.”

HouseCanary: Democratizing Valuation Models

San Francisco-based HouseCanary takes a different approach, offering white-label AI valuation tools to mortgage lenders, appraisers, and government agencies. Their Collateral Valuation Intelligence platform generates property valuations in seconds, complete with confidence intervals and explanations of key price drivers.

What distinguishes HouseCanary is transparency. Unlike “black box” AI systems, their models provide detailed breakdowns of how each variable influenced the final valuation. This interpretability is crucial for regulatory compliance—mortgage lenders must be able to explain their underwriting decisions to federal regulators, a requirement that opaque neural networks struggle to satisfy.
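The kind of breakdown described here can be illustrated with the simplest interpretable model, an additive one in which each variable's contribution is its coefficient times its value. This is a sketch under stated assumptions, not HouseCanary's actual method: the base price, coefficients, and feature names are all hypothetical.

```python
# Hypothetical coefficients for an additive (linear) price model.
BASE_PRICE = 100_000.0
COEFFICIENTS = {
    "sqft": 120.0,                # dollars per square foot
    "bedrooms": 8_000.0,          # dollars per bedroom
    "miles_to_transit": -5_000.0, # dollars lost per mile from transit
}


def explain_valuation(features):
    """Return the valuation and each feature's dollar contribution to it."""
    contributions = {
        name: COEFFICIENTS[name] * features[name] for name in COEFFICIENTS
    }
    total = BASE_PRICE + sum(contributions.values())
    return total, contributions


value, drivers = explain_valuation(
    {"sqft": 1800, "bedrooms": 3, "miles_to_transit": 2}
)

print(f"Valuation: ${value:,.0f}")
for name, amount in sorted(drivers.items(), key=lambda kv: -abs(kv[1])):
    print(f"  {name}: {amount:+,.0f}")
```

In an additive model the contributions sum exactly to the valuation, which is what makes the result straightforward to explain to a regulator. More complex models need separate attribution techniques to produce a comparable breakdown.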

The company’s Automated Valuation Model (AVM) is now approved by Fannie Mae and Freddie Mac as a supplement to traditional appraisals, a watershed moment for AI acceptance in heavily regulated financial markets.

European Sophistication: A Continental Perspective

European PropTech has evolved along a somewhat different trajectory, shaped by stronger data privacy regulations, more centralized land registries, and deeper cultural skepticism toward algorithmic decision-making.

Spacemaker: AI-Driven Urban Planning in Scandinavia

Norwegian company Spacemaker, acquired by Autodesk in 2020, demonstrates how AI can optimize real estate before a single brick is laid. Their platform uses generative design algorithms to evaluate millions of potential site configurations, optimizing for daylight exposure, noise levels, parking efficiency, environmental impact, and construction costs simultaneously.

Stockholm-based developer Besqab used Spacemaker to redesign a residential project in central Stockholm, increasing the number of units by 18 percent while improving average daylight hours per apartment by 23 percent—a combination that traditional planning methods would struggle to achieve.

“In Scandinavia, we have strong requirements for environmental sustainability and livability,” explains Håvard Haukeland, Spacemaker’s founder. “AI doesn’t replace architects—it expands the solution space they can explore, finding optimizations that human intuition might miss.”

Catella: Predictive Analytics for Commercial Real Estate

Swedish firm Catella operates one of Europe’s most sophisticated real estate research platforms, combining traditional market analysis with machine learning forecasts. Their PropTech division has developed AI models that predict rental price movements, vacancy rates, and investment yields across major European cities with quarterly updates.

Critically, Catella’s approach emphasizes human-AI collaboration. Their research reports clearly delineate which insights derive from algorithmic analysis versus expert interpretation. This hybrid methodology has proven persuasive to European institutional investors who remain cautious about fully automated decision-making.

During the 2023 commercial real estate downturn, Catella’s AI models identified emerging stress in German office markets six months before conventional analyses reached similar conclusions, allowing their clients to reposition portfolios proactively.

PriceHubble: Swiss Precision Meets AI Valuation

Zürich-based PriceHubble offers AI-powered property valuations across 15 European countries, processing data in multiple languages and regulatory frameworks. Their platform addresses a uniquely European challenge: cross-border investment analysis where legal systems, taxation regimes, and market conventions vary dramatically.

PriceHubble’s models incorporate country-specific variables—such as German energy efficiency certificates, French rental yield calculations, and UK leasehold structures—that generic AI systems would miss. This localization, combined with the Swiss reputation for precision, has made PriceHubble the valuation tool of choice for pan-European real estate portfolios.

The Integration Challenge: AI Meets Traditional Appraisal

The rise of AI analytics hasn’t eliminated traditional appraisal and research—it’s forcing a sometimes uncomfortable integration.

The Appraiser’s Evolving Role

Many professional appraisers initially viewed AI as an existential threat. The reality has proven more nuanced. Routine residential appraisals for conventional mortgages are indeed increasingly automated, with AI-generated valuations supplemented by brief property condition reports from field inspectors rather than full appraisals.

However, complex properties—unique commercial buildings, historic homes, properties in thin markets with few comparables, or assets requiring specialized knowledge—still require human expertise. The appraiser’s role is evolving from data gatherer to AI supervisor and exception handler.

“I spend far less time measuring square footage and far more time questioning whether the AI’s assumptions make sense for this specific property,” says Michael Torres, a certified appraiser in Austin, Texas. “When the algorithm produces an unexpectedly high valuation, is it identifying genuine value that comparable sales miss, or is it overfitting to irrelevant variables? That judgment requires human expertise.”

Progressive appraisal firms are positioning themselves as AI-augmented advisors. They use algorithmic tools for initial valuations, then apply professional judgment to validate, adjust, or challenge the results. This hybrid approach often produces better outcomes than either method alone.

Research Departments Rethink Their Mission

The research departments of major real estate firms face similar adaptation pressures. Producing quarterly market reports summarizing recent transactions and cap rate movements—once a core function—adds limited value when anyone can query an AI for the same information in seconds.

Forward-thinking research teams are pivoting toward questions that AI handles poorly: interpreting policy changes, forecasting disruptions from new technologies, understanding shifting cultural preferences, and synthesizing insights across multiple markets and asset classes.

“AI is excellent at extrapolating from existing patterns,” observes James Whitmore, Head of Research at a London-based real estate investment trust. “It’s terrible at anticipating paradigm shifts—the impact of remote work on office demand, how climate migration might reshape sunbelt housing markets, what emerging retail formats mean for shopping centers. Those require human imagination informed by data, not data alone.”

The most effective research operations now feature data scientists working alongside economists, urban planners, and industry veterans—interdisciplinary teams that combine algorithmic power with contextual understanding.

Implementation Strategies: What Organizations Should Consider

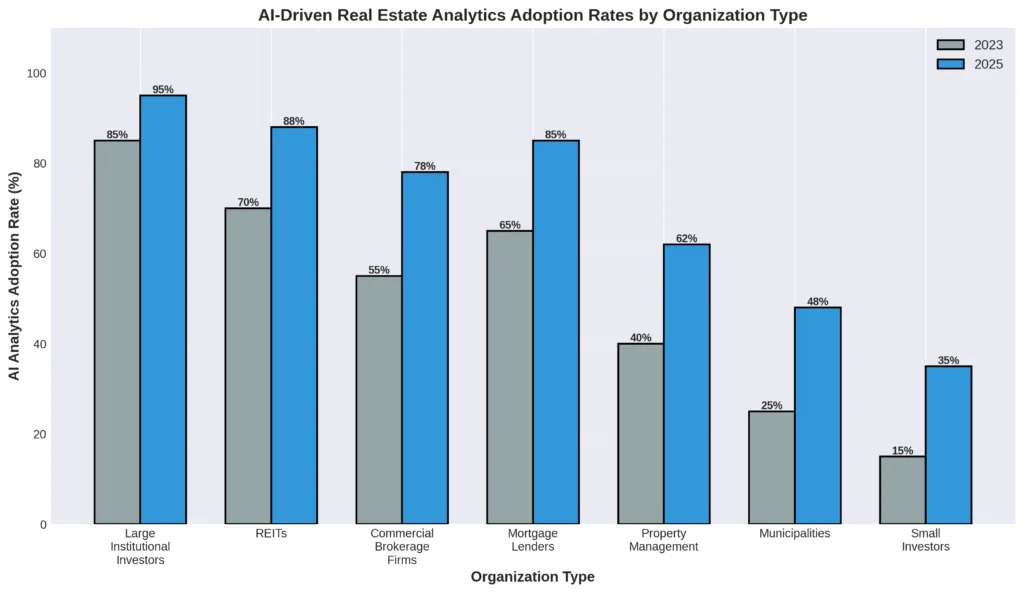

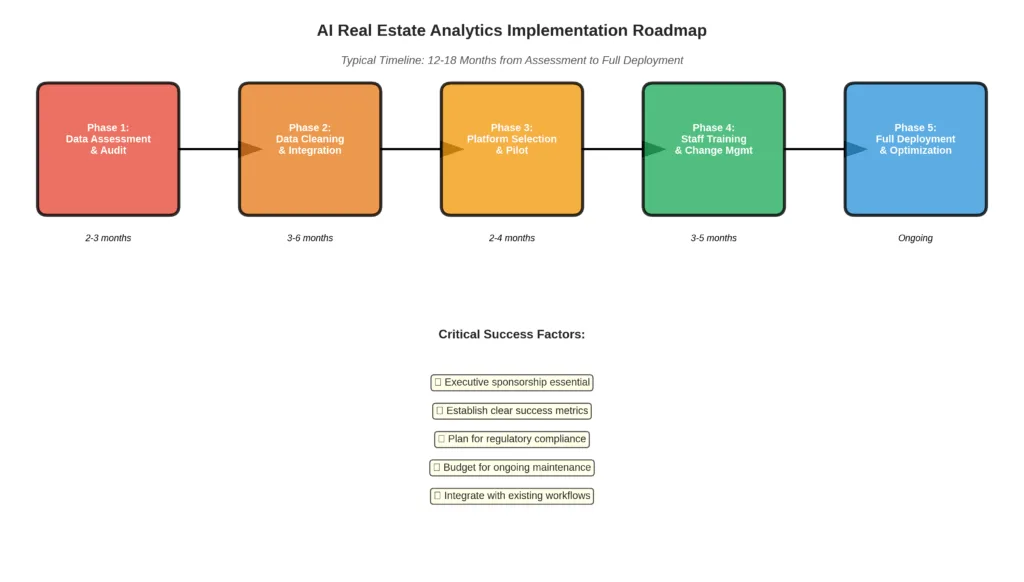

For corporations and municipalities considering AI analytics adoption, several critical factors warrant attention.

Data Infrastructure Comes First

The most sophisticated algorithms are worthless without quality data. Organizations must first audit their data environment: What information do we already collect? How is it stored and structured? What gaps exist in our datasets?

Many implementation failures stem from trying to deploy advanced AI before establishing basic data hygiene. Property records with inconsistent address formats, transaction histories missing key details, or maintenance records stored in incompatible systems will undermine any analytical platform.
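As a small example of what basic data hygiene involves, the sketch below normalizes address strings so that differently formatted records for the same property resolve to one key. The abbreviation map is a deliberately tiny, illustrative assumption; production pipelines generally rely on a full postal standard or a dedicated address-parsing service.

```python
import re

# Illustrative subset of street-suffix abbreviations (not a complete list).
SUFFIXES = {
    "street": "st",
    "avenue": "ave",
    "road": "rd",
    "boulevard": "blvd",
    "drive": "dr",
}


def normalize_address(raw):
    """Lowercase, strip punctuation, collapse spaces, abbreviate suffixes."""
    text = re.sub(r"[.,#]", " ", raw.lower())
    tokens = [SUFFIXES.get(token, token) for token in text.split()]
    return " ".join(tokens)


records = ["123 Main Street", "123 main st.", "123  MAIN ST"]
keys = {normalize_address(record) for record in records}
print(keys)
```

All three records collapse to a single key, so a transaction history or maintenance log stored under any of the variants can be joined to the same property.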

“Clients often want to jump straight to predictive modeling,” notes Dr. Chen from Quantrix Analytics. “We typically spend the first six months just cleaning and structuring their data. It’s not glamorous, but it’s essential.”

Build Versus Buy Decisions

Organizations face a fundamental choice: develop proprietary AI models in-house or license commercial platforms.

Building custom models offers potential competitive advantages—algorithms trained on proprietary data that competitors can’t access, models optimized for specific investment strategies or geographic focuses. However, this requires substantial investment in data science talent, computational infrastructure, and ongoing maintenance.

For most organizations outside specialized PropTech firms or major institutional investors, commercial platforms offer better risk-adjusted returns. The AI valuation problem is largely solved at the technical level; the challenge is applying it effectively within specific business contexts.

A hybrid approach often works best: use commercial platforms for standardized analytics while developing proprietary models for genuine competitive differentiators.

Regulatory Compliance and Explainability

Algorithmic decision-making attracts regulatory scrutiny, particularly in housing markets where fair lending laws prohibit discrimination. AI models must be auditable, with clear documentation of how variables are weighted and decisions reached.

The European Union’s AI Act, which entered into force in 2024 with obligations phasing in over the following years, classifies property valuation systems as “high-risk” applications requiring conformity assessments, human oversight, and bias testing. United States regulators are moving in similar directions, with the Federal Housing Finance Agency issuing detailed guidance on AVM usage in mortgage underwriting.

Organizations deploying AI analytics should engage compliance specialists early in the process, ensuring that models meet both current regulations and probable future requirements.

Change Management and Organizational Culture

Technology adoption ultimately hinges on people. Introducing AI analytics often threatens existing workflows, skill sets, and power structures within organizations.

Successful implementations involve extensive stakeholder engagement. Investment committees need training on how to interpret AI-generated insights and when to override algorithmic recommendations. Junior analysts require new skills around data preparation and model validation rather than Excel-based financial modeling. Senior executives must learn to trust (or productively question) probabilistic forecasts rather than the false precision of traditional discounted cash flow models.

“The hardest part isn’t the technology—it’s getting a 30-year veteran investor to accept that an algorithm might identify opportunities he’d overlook,” admits Marcus Thompson, Chief Investment Officer at a mid-sized REIT. “We’ve found that transparency helps. When the model explains its reasoning in terms that experienced professionals recognize, adoption becomes easier.”

The Municipal Advantage: AI for Public Sector Real Estate

City and county governments represent an underappreciated frontier for AI real estate analytics, with applications extending beyond property tax assessment.

Predictive Tax Revenue Modeling

Municipal budgets depend heavily on property tax receipts, making accurate valuation forecasting critical. Traditional assessment cycles—often conducted every few years—create significant lag between market movements and tax base adjustments.

Phoenix, Arizona, has implemented an AI system that continuously updates property valuations based on market transactions, building permits, demographic shifts, and economic indicators. The city’s finance department now projects tax revenues 18 months out with 93 percent accuracy, enabling more confident budget planning and bond issuance.

Affordable Housing Optimization

Cities struggling with housing affordability are using AI to identify optimal locations and strategies for subsidized development. New York City’s Department of Housing Preservation and Development employs machine learning models that analyze transit access, job center proximity, school quality, existing affordable housing concentrations, and development cost factors to prioritize sites for publicly supported projects.

The models also predict gentrification risk, helping the city implement preservation measures in neighborhoods where market pressures threaten existing affordable stock. While controversial—some critics argue that predictive models can become self-fulfilling prophecies—these tools offer transparency that political decision-making often lacks.

Climate Adaptation and Infrastructure Planning

Forward-looking municipalities are integrating climate risk into property valuation models. Miami-Dade County uses AI to predict how sea-level rise, flood risk, and hurricane exposure will affect property values across different timeframes, informing both building code updates and long-term infrastructure investment.

These models incorporate data from climate science, insurance actuarial tables, and observed market behavior in climate-affected areas. The goal isn’t perfect prediction—climate futures remain uncertain—but rather scenario planning that helps the county prepare for plausible outcomes.

Limitations, Risks, and the Road Ahead

Despite remarkable progress, AI-driven real estate analytics face significant limitations that warrant acknowledgment.

The Data Inequality Problem

AI models perform best in markets with abundant, high-quality data—typically affluent urban areas with frequent transactions and robust record-keeping. They struggle in rural markets, disadvantaged neighborhoods, or emerging economies where data is sparse or unreliable.

This creates a paradox: the communities that might benefit most from improved valuation accuracy—those suffering from historical underinvestment and information asymmetries—are precisely where algorithms perform worst. There’s a risk that AI analytics could widen rather than narrow the information gap between sophisticated and unsophisticated market participants.

Black Swan Events and Model Fragility

The COVID-19 pandemic demonstrated that even sophisticated AI models can fail during genuine disruptions. Algorithms trained on historical patterns had no framework for understanding how a global pandemic would affect office demand, retail spaces, or housing preferences.

While models are being updated with pandemic-era data, this raises an uncomfortable question: Are we simply training AI to fight the last war? The next disruption—whether climate-related, technological, or geopolitical—may look nothing like past crises.

The Amplification Risk

When many market participants rely on similar AI models, there’s a danger of herding behavior and reduced market efficiency. If every institutional investor’s algorithm identifies the same undervalued asset class, the resulting capital flood eliminates the opportunity everyone rushed to exploit.

More insidiously, AI recommendations can become self-fulfilling. If models predict certain neighborhoods will appreciate rapidly and investors respond by purchasing aggressively, the resulting demand drives the predicted appreciation—not because the models identified genuine value, but because collective action created it.

Privacy and Surveillance Concerns

Advanced AI models increasingly incorporate granular personal data—commuting patterns inferred from mobile phones, consumption behavior from credit cards, social network connections from digital platforms. This raises profound privacy questions: Should your property value depend on your personal characteristics or behaviors? Where is the line between legitimate market analysis and invasive surveillance?

European data protection regulations provide some guardrails, but the technological capability for hyper-personalized property valuation exists and will be tempting to deploy in less-regulated environments.

A New Equilibrium

The integration of AI into real estate analytics represents not a disruption but a transition—from an industry relying almost exclusively on human judgment informed by limited data, to one where algorithmic analysis and human expertise form a symbiotic partnership.

The most successful organizations in 2025 are those that recognize AI’s strengths and limitations. Algorithms excel at processing vast information, identifying subtle patterns, and generating consistent valuations at scale. Humans provide contextual understanding, ethical judgment, and the ability to reason through unprecedented situations.

Neither is sufficient alone. The appraiser who ignores AI tools will find themselves outcompeted by augmented peers. The investor who blindly follows algorithmic recommendations without critical assessment will eventually face a reckoning when models fail.

For New York Times readers contemplating this landscape—whether as homeowners wondering about property values, investors evaluating opportunities, or citizens concerned about urban development—the key insight is that real estate has joined finance, medicine, and transportation in becoming a fundamentally data-driven domain. The “market” increasingly means not just human buyers and sellers, but the algorithmic intermediaries shaping their perceptions and decisions.

As with previous technological revolutions, this one will create winners and losers, efficiencies and excesses, unprecedented insights and novel risks. The built environment that shapes our daily lives—where we live, work, and gather—is being reimagined through billions of calculations per second. Understanding that transformation is no longer optional for anyone who cares about cities, communities, and the spaces we inhabit.

The algorithms are already here. The question now is how wisely we deploy them.

The SJ Global Insights team specializes in the intersection of technology, real estate, and urban development. For more analysis on PropTech trends, visit sjglobal.org/insights
